| Header word | Contents |
|---|---|
| 0 | |
| 1 | |
| 2 | |
| 3 | ttH |
| 4 | ttM |
| 5 | ttL |
| 6 | tac1 |
| 7 | tac2 |
| ... | ... |

in the event header and contains the register bits as:

ttL ... bits: 15 <-- 0

ttM ... bits: 30 <-- 16 (the register's top bit, where clock bit 31 would be, is always zero!)

ttH ... bits: 46 <-- 31

Notice the anomaly of ttM: its highest bit is not clock bit 31, as one would expect. That register bit is unused and should always be zero.
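The register layout above can be illustrated with a small Python sketch that splits a 47-bit usec clock count into the three register values. The function name `split_clock` is hypothetical; it only encodes the bit ranges listed above, including the always-zero top bit of ttM.

```python
# Sketch: split a 47-bit usec clock count into the ttH/ttM/ttL register
# values, following the bit layout above (hypothetical helper name).

CLOCK_BITS = 47

def split_clock(tu: int) -> tuple[int, int, int]:
    """Return (ttH, ttM, ttL) for a clock count tu with 0 <= tu < 2**47."""
    if not 0 <= tu < (1 << CLOCK_BITS):
        raise ValueError("clock count must fit in 47 bits")
    ttL = tu & 0xFFFF          # clock bits 15..0 fill all 16 register bits
    ttM = (tu >> 16) & 0x7FFF  # clock bits 30..16 fill the low 15 bits; top bit stays 0
    ttH = (tu >> 31) & 0xFFFF  # clock bits 46..31 fill all 16 register bits
    return ttH, ttM, ttL

print([hex(r) for r in split_clock(2**47 - 1)])  # ['0xffff', '0x7fff', '0xffff']
```

Even for the largest possible count, ttM never exceeds 0x7fff, which is the anomaly described above.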

Thus the time of the usec clock can be expressed as:

$$t_u = \mathrm{ttL} + 2^{16}\,\mathrm{ttM} + 2^{31}\,\mathrm{ttH} \quad [\mu\mathrm{sec}]$$

(Notice that ttH is weighted by 2^31 rather than 2^32 because of the ttM anomaly: ttM carries only 15 clock bits.)
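The formula for tu can be sketched in Python as follows. The function name `clock_usec` is hypothetical, and masking ttM to 15 bits is a defensive assumption (the note says its top bit should always be zero anyway).

```python
# Sketch: combine the three 16-bit clock registers into the usec count tu.

def clock_usec(ttH: int, ttM: int, ttL: int) -> int:
    """tu = ttL + 2**16 * ttM + 2**31 * ttH  [usec]."""
    # ttM carries only 15 clock bits (16..30), so ttH starts at clock bit 31
    # and is weighted by 2**31 rather than 2**32. Masking ttM to 15 bits is a
    # defensive assumption: its top bit should always be zero anyway.
    return (ttL & 0xFFFF) + (ttM & 0x7FFF) * 2**16 + (ttH & 0xFFFF) * 2**31

print(clock_usec(ttH=1, ttM=0, ttL=0))  # 2147483648
```

A quick sanity check of the 2^31 weight: the count just below a ttH increment (ttM = 0x7fff, ttL = 0xffff) plus one tick equals ttH = 1 with the other registers zero.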

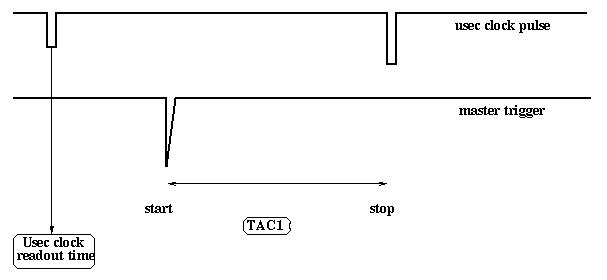

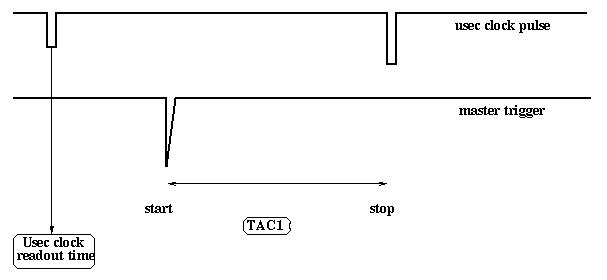

The usec clock registers are latched at the main trigger time (which is a fixed time after the pre-trigger, typically 600 nsec). Tac1 is started at the main trigger time and stopped at the next usec clock tick.

Thus, by adding the tac1 information, we can determine the master trigger time to better than 1 nsec:

$$t_M = 1000\,t_u - \mathrm{tac1}/4 \quad [\mathrm{nsec}]$$

(again relative to some arbitrary starting time). The tac1 calibration is supposed to be 0.25 nsec/ch, which is where the factor 1/4 comes from, but I don't think it has been measured.
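A minimal Python sketch of the tM formula, assuming the nominal (unmeasured) 0.25 nsec/ch calibration. The names `master_trigger_ps` and `TAC1_PS_PER_CHANNEL` are hypothetical. The result is kept as an exact integer in picoseconds because a double-precision float can only hold quarter-nanosecond steps below 2^51 nsec, which the clock passes roughly 26 days after a reset.

```python
# Sketch: master trigger time from the usec clock count tu and the tac1
# channel, in integer picoseconds. The 250 ps/ch default is the nominal
# 0.25 nsec/ch calibration, which has reportedly not been measured.

PS_PER_USEC = 1_000_000
TAC1_PS_PER_CHANNEL = 250  # nominal 0.25 nsec/ch

def master_trigger_ps(tu: int, tac1: int, ps_per_ch: int = TAC1_PS_PER_CHANNEL) -> int:
    """tM = tu*1000 - tac1/4 [nsec], returned as an exact integer in psec."""
    return tu * PS_PER_USEC - tac1 * ps_per_ch

# Example: tu = 10 usec, tac1 = 400 channels -> 10000 - 100 = 9900 nsec
print(master_trigger_ps(10, 400) / 1000)  # 9900.0
```

If the tac1 calibration is ever measured, the `ps_per_ch` argument is the place to plug it in.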

Since the time resolution of the germanium detectors is of the order of 8-10 nsec, tM will have at least the same uncertainty.

The 47-bit clock is reset every time the master VXI crate (#7) is rebooted, or when it rolls over after 2^47 usec (about 4.46 years), whichever comes first.
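The 4.46-year figure follows from 2^47 usec; the Python sketch below checks that arithmetic and adds a wrap-aware difference between two clock readings. Both function names are hypothetical, and the modular difference is only valid if the counter wrapped at most once and the crate was not rebooted between the two readings.

```python
# Sketch: rollover period of the 47-bit usec clock and a wrap-aware
# difference between two clock readings (hypothetical helper names).

CLOCK_BITS = 47
PERIOD_USEC = 1 << CLOCK_BITS          # ticks before the counter wraps to zero
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year

def rollover_years() -> float:
    """Time for the 47-bit usec counter to wrap around, in years."""
    return PERIOD_USEC * 1e-6 / SECONDS_PER_YEAR

def elapsed_usec(tu_earlier: int, tu_later: int) -> int:
    """Elapsed usec between two readings.

    Assumes at most one wrap-around between the readings; the result is
    wrong if the crate was rebooted (clock reset) in between.
    """
    return (tu_later - tu_earlier) % PERIOD_USEC

print(f"{rollover_years():.2f} years")  # 4.46 years
```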